- INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS HOW TO

- INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS INSTALL

- INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS DRIVERS

- INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS UPDATE

RUN echo debconf shared/accepted-oracle-license-v1-1 seen true | sudo debconf-set-selections RUN echo debconf shared/accepted-oracle-license-v1-1 select true | sudo debconf-set-selections

INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS UPDATE

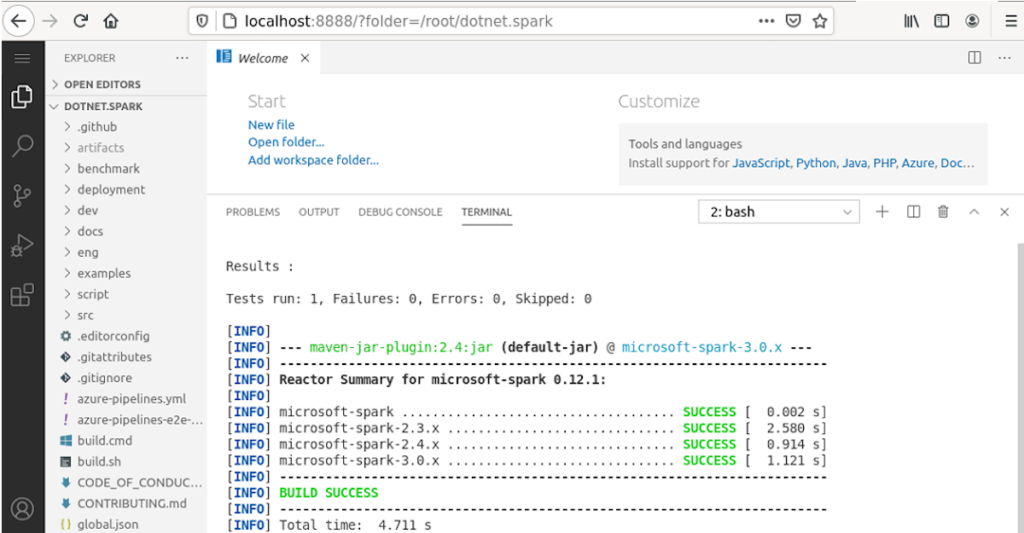

RUN apt-get update -y#automatically agreeing on oracle license agreement that normally pops up while installing java8 RUN apt-add-repository ppa:webupd8team/java -y >code class="bash plain"#RUN apt-get >code class="bash plain">software-properties-common -y Spark Dockerfile #specifying our base docker-image We start by Ubuntu-14.04 as our base image and incrementally RUN commands that assembles our desired image, mainly installing java and downloading a pre-compiled version of Spark once these are in place we shall be ready to start any of our spark components. In this section we provide and describe the Dockerfile that automates the creation of the Spark Docker image. use the driver Dockerfile to build a Spark-driver encapsulating a simple WordCount application and run it on top of the deployed cluster.provide a Dockerfile that extends the base Spark Docker image to a generic Spark-driver that is suitable for running a Spark application as a ‘fat jar’.provide the Docker instructions necessary to build the image, and deploy a standalone cluster composed of a master and n slave nodes and run an interactive Spark-shell on top of deployed cluster.provide a Dockerfile for a Spark Docker image, that is suitable for running either a single master, a single slave, or an interactive spark-shell in the standalone mode.The only requirement to get a Spark node up and running is to have Java installed and a compiled version of Spark for the standalone cluster, it’s indeed essential to have all the nodes running the same Java and Spark versions, and able to actively communicate with each other. Spark provides a very simple standalone deployment mode. While more organizations are turning into Spark and considering the high development pace carried within the platform, with new features and bug fixes added and released every couple of weeks, a convenient way for testing the platform and realizing newly added features is crucial. 
This is where the provided Spark Docker image take place. According to the Hadoop survey carried by syncsort earlier this year, 70% of the survey participants showed most interest in Apache Spark higher than the current adoption leader MapReduce.
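The build and deployment steps listed above (build the image, start a master and n slave nodes, then attach an interactive spark-shell) could be scripted roughly as follows. This is a minimal dry-run sketch rather than the guide's own instructions: it only prints the `docker` commands so they can be reviewed before running. The image name `my/spark`, the network name `spark-net`, the container names, and the install path `/opt/spark` are all assumptions made for illustration. `org.apache.spark.deploy.master.Master` and `org.apache.spark.deploy.worker.Worker` are Spark's standalone daemon classes, and 7077 and 8080 are the master's default RPC and web UI ports.

```shell
#!/bin/sh
# Dry-run sketch: prints the docker commands instead of executing them.
# IMAGE, NETWORK and SPARK_HOME are hypothetical values, not taken from the guide.
IMAGE="${IMAGE:-my/spark}"
NETWORK="${NETWORK:-spark-net}"
SPARK_HOME="${SPARK_HOME:-/opt/spark}"
MASTER_URL="spark://spark-master:7077"

print_cluster_commands() {
    workers="$1"   # number of slave (worker) containers to start

    # Build the image from the Dockerfile in the current directory.
    echo "docker build -t $IMAGE ."

    # A user-defined network lets the containers resolve each other by name.
    echo "docker network create $NETWORK"

    # Master: the fixed hostname keeps the advertised master URL predictable.
    echo "docker run -d --name spark-master --hostname spark-master --network $NETWORK -p 8080:8080 -p 7077:7077 $IMAGE $SPARK_HOME/bin/spark-class org.apache.spark.deploy.master.Master"

    # One container per worker, each registering with the master URL.
    i=1
    while [ "$i" -le "$workers" ]; do
        echo "docker run -d --name spark-worker-$i --network $NETWORK $IMAGE $SPARK_HOME/bin/spark-class org.apache.spark.deploy.worker.Worker $MASTER_URL"
        i=$((i + 1))
    done

    # Interactive shell attached to the same cluster.
    echo "docker run -it --rm --network $NETWORK $IMAGE $SPARK_HOME/bin/spark-shell --master $MASTER_URL"
}

# Print the commands for the requested number of workers (default: 2).
print_cluster_commands "${1:-2}"
```

Piping the output to `sh` would execute the commands in order. Printing first keeps the sketch safe to run on a machine where Docker is not yet set up.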


In this post we provide a comprehensive guide to building a Spark Docker image and using it to provision a ‘standalone’ Spark cluster composed of a master node and an indefinite number of slave nodes, each running within its own Docker container. Additionally, we provide two examples for running (1) an interactive Spark-shell and (2) a WordCount Spark application on top of the cluster. The main technology drivers for this guide are Docker and Spark; we expect readers to be familiar with the Docker engine, Apache Spark, and its installation in standalone mode.

Apache Spark has been an active open source project since 2010, with continuously growing attention in the big data world.

Creating and Publishing Zeppelin docker image

In order to create and/or publish an image, you need to set the DockerHub credentials DOCKER_USERNAME, DOCKER_PASSWORD, and DOCKER_EMAIL as environment variables.


You need to install Docker on your machine.
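Both prerequisites mentioned in this guide, a working Docker installation and the DockerHub credential variables, can be checked before attempting a build or publish. The following is a small sketch under stated assumptions: the function names `require_env` and `check_prerequisites` are hypothetical helpers introduced here, and only the three variable names come from the text.

```shell
#!/bin/sh
# Returns 0 when every named environment variable is set and non-empty;
# otherwise prints the missing names to stderr and returns 1.
# (Hypothetical helper, not part of Docker or Zeppelin.)
require_env() {
    missing=0
    for name in "$@"; do
        # Indirect lookup of the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing environment variable: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Checks that the docker CLI is on the PATH and the credentials are set.
check_prerequisites() {
    if ! command -v docker >/dev/null 2>&1; then
        echo "docker is not installed or not on PATH" >&2
        return 1
    fi
    require_env DOCKER_USERNAME DOCKER_PASSWORD DOCKER_EMAIL
}
```

A typical use would be `check_prerequisites && echo "ready to build"` at the top of a build script, so that a missing variable is reported before any image work starts.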


This document contains instructions for building Docker containers for Zeppelin. It mainly provides guidance on how to create, publish, and run Docker images for Zeppelin releases.